
Text-to-Speech with Tacotron2

```python
import IPython
import matplotlib
import matplotlib.pyplot as plt
```

Overview

This tutorial shows how to build a text-to-speech pipeline using the pretrained Tacotron2 in torchaudio.

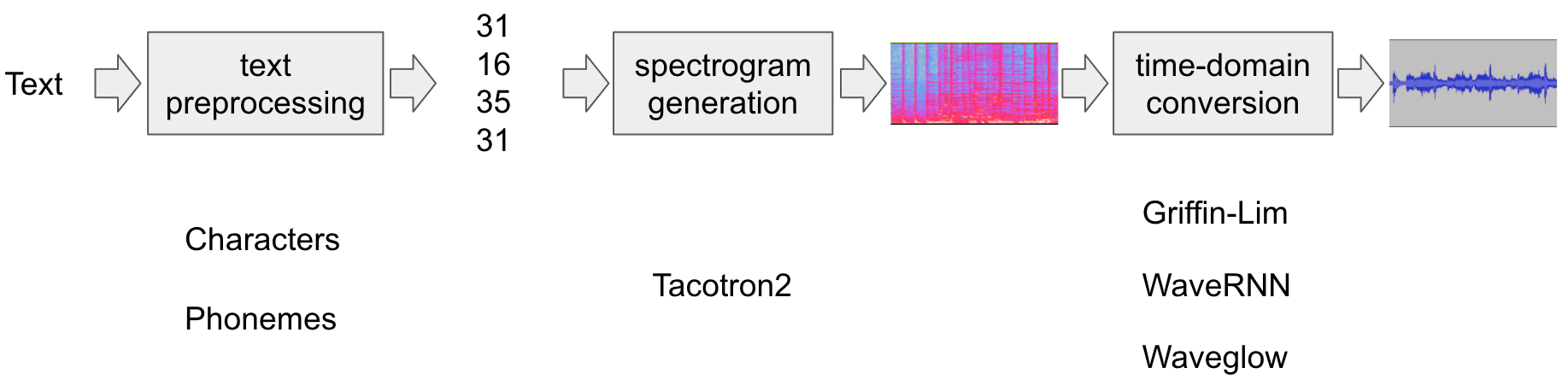

The text-to-speech pipeline goes as follows:

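1. Text preprocessing

   First, the input text is encoded into a list of symbols. In this tutorial, we use English characters and phonemes as the symbols.

2. Spectrogram generation

   A spectrogram is generated from the encoded text. We use the `Tacotron2` model for this.

3. Time-domain conversion

   The last step is converting the spectrogram into a waveform. The model that generates speech from a spectrogram is called a vocoder. This tutorial uses three different vocoders: `WaveRNN`, `GriffinLim`, and Nvidia's WaveGlow.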

The figure above illustrates the whole process.

All the related components are bundled in `torchaudio.pipelines.Tacotron2TTSBundle`, but this tutorial also covers the process under the hood.

Preparation

First, we install the necessary dependencies. In addition to `torchaudio`, `DeepPhonemizer` is required to perform phoneme-based encoding.

```
%%bash
pip3 install deep_phonemizer
```

```python
import torch
import torchaudio

matplotlib.rcParams["figure.figsize"] = [16.0, 4.8]

torch.random.manual_seed(0)
device = "cuda" if torch.cuda.is_available() else "cpu"

print(torch.__version__)
print(torchaudio.__version__)
print(device)
```

2.0.0

2.0.1

cpu

Text Processing

Character-based Encoding

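In this section, we go through how character-based encoding works.

Since the pretrained Tacotron2 model expects a specific symbol table, torchaudio provides the same functionality ready-made. This section mainly explains the basics of the encoding.

First, we define the set of symbols. For example, we can use `'_-!\'(),.:;? abcdefghijklmnopqrstuvwxyz'`. Then we map each character of the input text to the index of the corresponding symbol in the table.

The following is an example of such processing. In this example, symbols that do not appear in the table are ignored.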
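A minimal sketch of the lookup just described, assuming the input is lowercased first (the table holds only lowercase letters, and the output below starts with the index of `h`). The names `look_up` and `text_to_sequence` are chosen for illustration:

```python
# Symbol table from the text above; the position of a symbol is its index.
symbols = "_-!'(),.:;? abcdefghijklmnopqrstuvwxyz"
look_up = {s: i for i, s in enumerate(symbols)}


def text_to_sequence(text):
    """Lowercase the text and map each known character to its index; drop the rest."""
    return [look_up[c] for c in text.lower() if c in look_up]


text = "Hello world! Text to speech!"
print(text_to_sequence(text))
```

Characters outside the table, such as `~`, are silently skipped rather than raising an error.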

[19, 16, 23, 23, 26, 11, 34, 26, 29, 23, 15, 2, 11, 31, 16, 35, 31, 11, 31, 26, 11, 30, 27, 16, 16, 14, 19, 2]

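As mentioned above, the symbol table and indices must match what the pretrained Tacotron2 model expects. torchaudio provides this transform along with the pretrained model. For example, you can instantiate and use such a transform as follows.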

tensor([[19, 16, 23, 23, 26, 11, 34, 26, 29, 23, 15, 2, 11, 31, 16, 35, 31, 11,
31, 26, 11, 30, 27, 16, 16, 14, 19, 2]])

tensor([28], dtype=torch.int32)

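The `processor` object takes either a text or a list of texts as input. When a list of texts is provided, the returned `lengths` variable holds the valid length of each processed token sequence in the output batch.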
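To make the meaning of `lengths` concrete, here is a pure-Python sketch of batching: each text is encoded, right-padded to the longest sequence, and its unpadded length recorded. The padding index 0 (the `_` symbol) and the helpers `encode` and `encode_batch` are assumptions for illustration, not torchaudio's implementation.

```python
symbols = "_-!'(),.:;? abcdefghijklmnopqrstuvwxyz"
look_up = {s: i for i, s in enumerate(symbols)}


def encode(text):
    """Character-based encoding: lowercase, keep only symbols in the table."""
    return [look_up[c] for c in text.lower() if c in look_up]


def encode_batch(texts, pad_id=0):
    """Encode several texts, right-pad them to a common length, report valid lengths."""
    seqs = [encode(t) for t in texts]
    lengths = [len(s) for s in seqs]
    max_len = max(lengths)
    padded = [s + [pad_id] * (max_len - len(s)) for s in seqs]
    return padded, lengths


padded, lengths = encode_batch(["Hello world!", "Text to speech!"])
print(lengths)  # [12, 15]
```

Everything past `lengths[i]` in row `i` is padding, which is why a model consuming the batch needs the lengths alongside the padded tensor.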

The intermediate representation can be retrieved as follows.
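Because the encoding is a plain table lookup, decoding is just indexing back into the same table. A sketch using the character table from earlier in this section and the indices printed above (torchaudio's `TextProcessor` exposes its own table through its `tokens` property):

```python
symbols = "_-!'(),.:;? abcdefghijklmnopqrstuvwxyz"
encoded = [19, 16, 23, 23, 26, 11, 34, 26, 29, 23, 15, 2, 11, 31,
           16, 35, 31, 11, 31, 26, 11, 30, 27, 16, 16, 14, 19, 2]

# Each index selects the symbol at that position of the table.
tokens = [symbols[i] for i in encoded]
print(tokens)
print("".join(tokens))  # hello world! text to speech!
```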

['h', 'e', 'l', 'l', 'o', ' ', 'w', 'o', 'r', 'l', 'd', '!', ' ', 't', 'e', 'x', 't', ' ', 't', 'o', ' ', 's', 'p', 'e', 'e', 'c', 'h', '!']

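Phoneme-based Encoding

Phoneme-based encoding is similar to character-based encoding, but it uses a phoneme-based symbol table and a G2P (Grapheme-to-Phoneme) model.

The details of the G2P model are out of the scope of this tutorial; we only look at what the conversion produces.

As with character-based encoding, the encoding process must match what the pretrained Tacotron2 model was trained on.

torchaudio provides an interface to create this process.

The following code illustrates how to create and use the process. Behind the scenes, a G2P model is created using the `DeepPhonemizer` package, and the pretrained weights published by the author of `DeepPhonemizer` are fetched.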


tensor([[54, 20, 65, 69, 11, 92, 44, 65, 38, 2, 11, 81, 40, 64, 79, 81, 11, 81,
20, 11, 79, 77, 59, 37, 2]])

tensor([25], dtype=torch.int32)

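Notice that the encoded values differ from those in the character-based encoding example.

The intermediate representation looks like the following.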

['HH', 'AH', 'L', 'OW', ' ', 'W', 'ER', 'L', 'D', '!', ' ', 'T', 'EH', 'K', 'S', 'T', ' ', 'T', 'AH', ' ', 'S', 'P', 'IY', 'CH', '!']
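To see what the G2P step contributes, here is a toy stand-in: a hand-written lexicon covering only the five words of the example, which reproduces the phoneme sequence above. `TOY_LEXICON`, `PHONE_TABLE`, and its indices are hypothetical. DeepPhonemizer predicts pronunciations with a trained model (so it also handles unseen words), and torchaudio's phoneme table assigns different indices, as the tensor above shows.

```python
import re

# Hypothetical lexicon; a real G2P model predicts these pronunciations.
TOY_LEXICON = {
    "hello": ["HH", "AH", "L", "OW"],
    "world": ["W", "ER", "L", "D"],
    "text": ["T", "EH", "K", "S", "T"],
    "to": ["T", "AH"],
    "speech": ["S", "P", "IY", "CH"],
}

# Hypothetical phoneme table; the real one and its indices differ.
PHONE_TABLE = ["_", " ", "!", "AH", "CH", "D", "EH", "ER", "HH",
               "IY", "K", "L", "OW", "P", "S", "T", "W"]
phone_to_id = {p: i for i, p in enumerate(PHONE_TABLE)}


def toy_g2p(text):
    """Split into words and single non-letter characters; look words up."""
    tokens = []
    for piece in re.findall(r"[a-z]+|[^a-z]", text.lower()):
        if piece.isalpha():
            # Unknown words raise KeyError: handling them is the G2P model's job.
            tokens.extend(TOY_LEXICON[piece])
        else:
            tokens.append(piece)  # spaces and punctuation pass through
    return tokens


tokens = toy_g2p("Hello world! Text to speech!")
ids = [phone_to_id[p] for p in tokens]
print(tokens)
print(len(ids))  # 25
```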

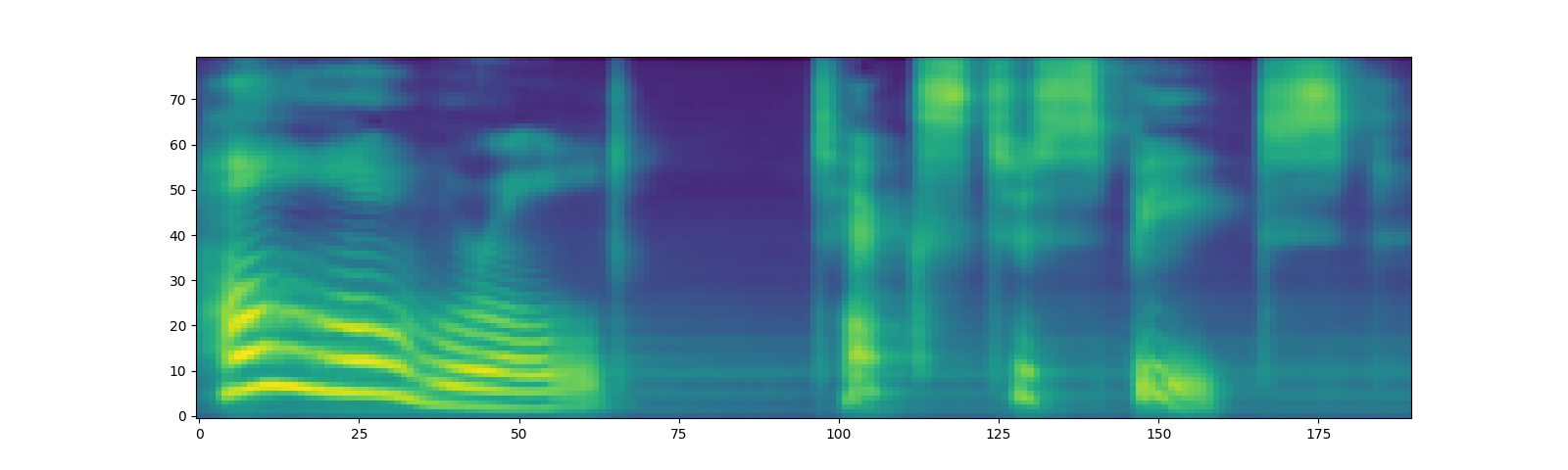

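Spectrogram Generation

`Tacotron2` is the model we use to generate a spectrogram from the encoded text. For details of the model, please refer to the paper.

Instantiating a Tacotron2 model with pretrained weights is easy, but note that the input to a Tacotron2 model must be processed by the matching text processor.

`torchaudio.pipelines.Tacotron2TTSBundle` bundles matching models and processors together so that it is easy to create the pipeline.

For the available bundles and their usage, please refer to `Tacotron2TTSBundle`.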

```python
bundle = torchaudio.pipelines.TACOTRON2_WAVERNN_PHONE_LJSPEECH
processor = bundle.get_text_processor()
tacotron2 = bundle.get_tacotron2().to(device)

text = "Hello world! Text to speech!"

with torch.inference_mode():
    processed, lengths = processor(text)
    processed = processed.to(device)
    lengths = lengths.to(device)
    spec, _, _ = tacotron2.infer(processed, lengths)

_ = plt.imshow(spec[0].cpu().detach(), origin="lower", aspect="auto")
```

Downloading: "https://download.pytorch.org/torchaudio/models/tacotron2_english_phonemes_1500_epochs_wavernn_ljspeech.pth" to /root/.cache/torch/hub/checkpoints/tacotron2_english_phonemes_1500_epochs_wavernn_ljspeech.pth


Note that the `Tacotron2.infer` method performs multinomial sampling, so generating the spectrogram involves randomness.
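This also explains why repeated runs, such as the three spectrogram shapes that follow, can differ even though the tutorial called `torch.random.manual_seed(0)` once at the start: a seeded generator yields a fixed sequence, but every sampling call advances its state. A minimal pure-Python illustration, with `random.Random` standing in for PyTorch's generator (an analogy, not Tacotron2's code); the helper `sample_categorical` is hypothetical:

```python
import random


def sample_categorical(weights, k, rng):
    """Draw k indices with probability proportional to `weights` using `rng`."""
    return rng.choices(range(len(weights)), weights=weights, k=k)


weights = [0.1, 0.2, 0.3, 0.4]

# One generator seeded once: successive calls consume its state, so they can differ.
shared = random.Random(0)
first = sample_categorical(weights, 8, shared)
second = sample_categorical(weights, 8, shared)

# A freshly seeded generator reproduces the first draw exactly.
again = sample_categorical(weights, 8, random.Random(0))
print(first == again)  # True
```

By the same reasoning, reseeding immediately before each `tacotron2.infer` call should make a given spectrogram repeatable, at least on CPU; GPU kernels may add nondeterminism of their own.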

torch.Size([80, 155])

torch.Size([80, 167])

torch.Size([80, 164])

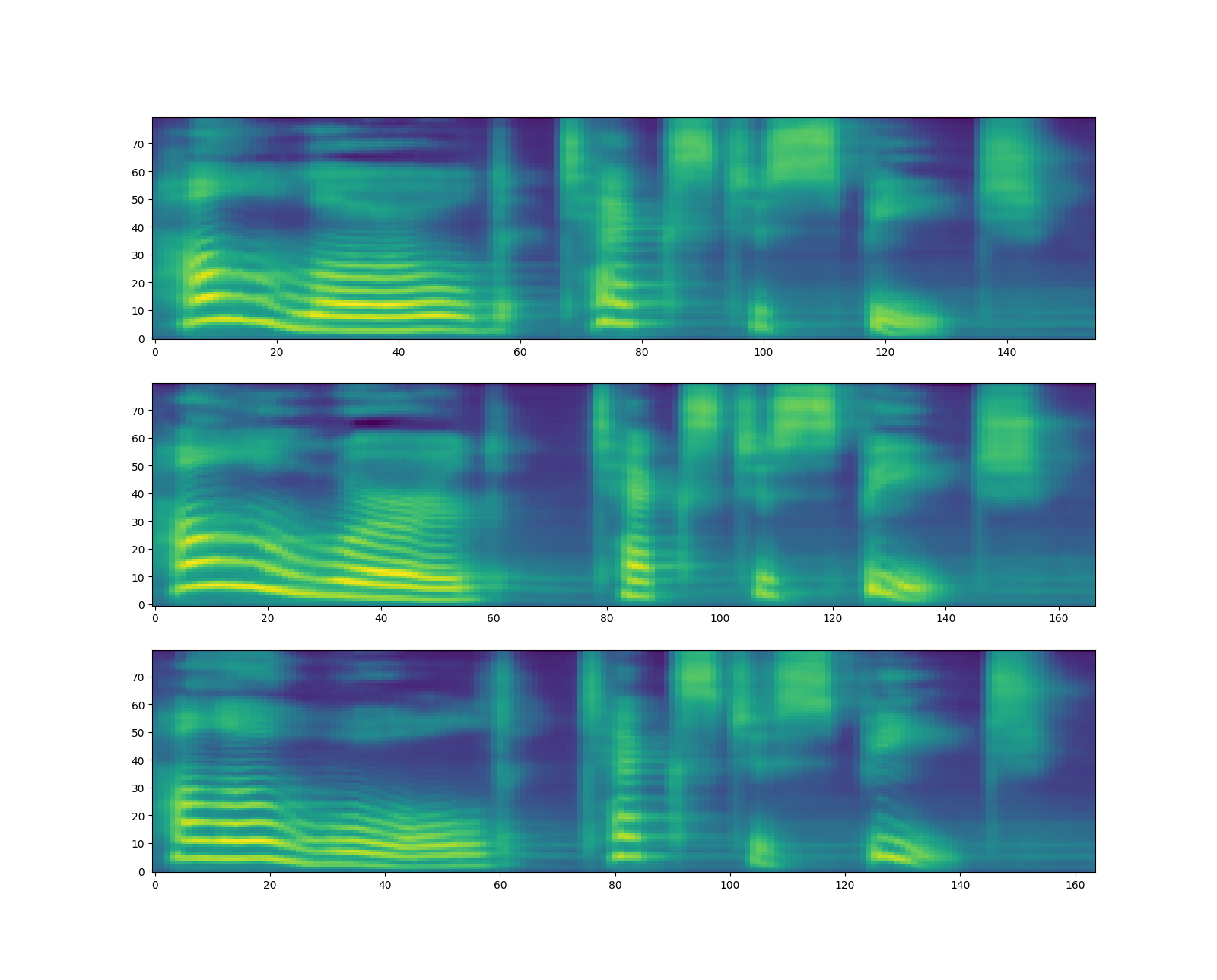

Waveform Generation

Once the spectrogram is generated, the last step is to recover the waveform from it.

torchaudio provides vocoders based on `GriffinLim` and `WaveRNN`.

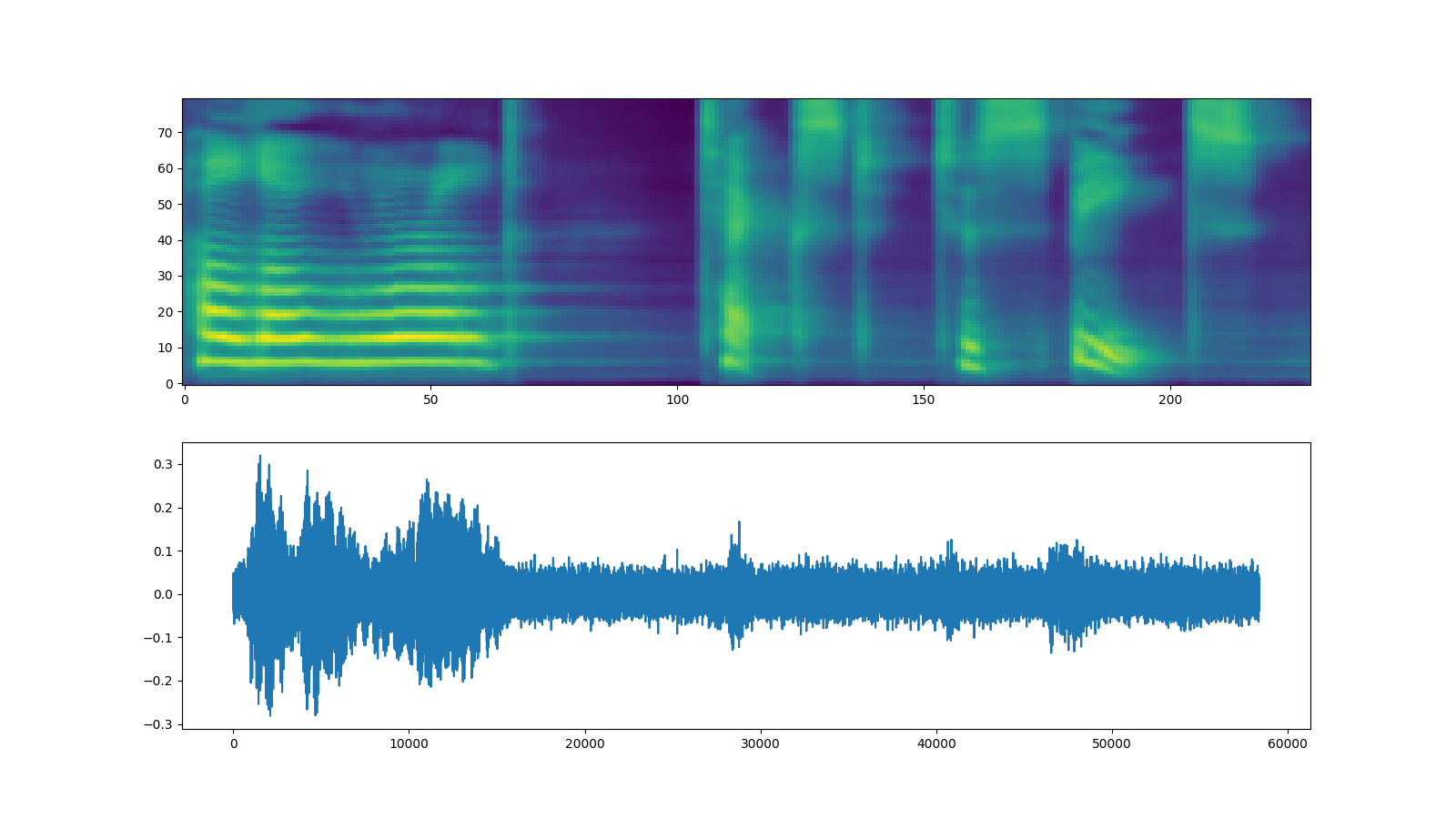

WaveRNN

Continuing from the previous section, we can instantiate the matching WaveRNN model from the same bundle.

```python
bundle = torchaudio.pipelines.TACOTRON2_WAVERNN_PHONE_LJSPEECH

processor = bundle.get_text_processor()
tacotron2 = bundle.get_tacotron2().to(device)
vocoder = bundle.get_vocoder().to(device)

text = "Hello world! Text to speech!"

with torch.inference_mode():
    processed, lengths = processor(text)
    processed = processed.to(device)
    lengths = lengths.to(device)
    spec, spec_lengths, _ = tacotron2.infer(processed, lengths)
    waveforms, lengths = vocoder(spec, spec_lengths)

fig, [ax1, ax2] = plt.subplots(2, 1, figsize=(16, 9))
ax1.imshow(spec[0].cpu().detach(), origin="lower", aspect="auto")
ax2.plot(waveforms[0].cpu().detach())

IPython.display.Audio(waveforms[0:1].cpu(), rate=vocoder.sample_rate)
```

Downloading: "https://download.pytorch.org/torchaudio/models/wavernn_10k_epochs_8bits_ljspeech.pth" to /root/.cache/torch/hub/checkpoints/wavernn_10k_epochs_8bits_ljspeech.pth


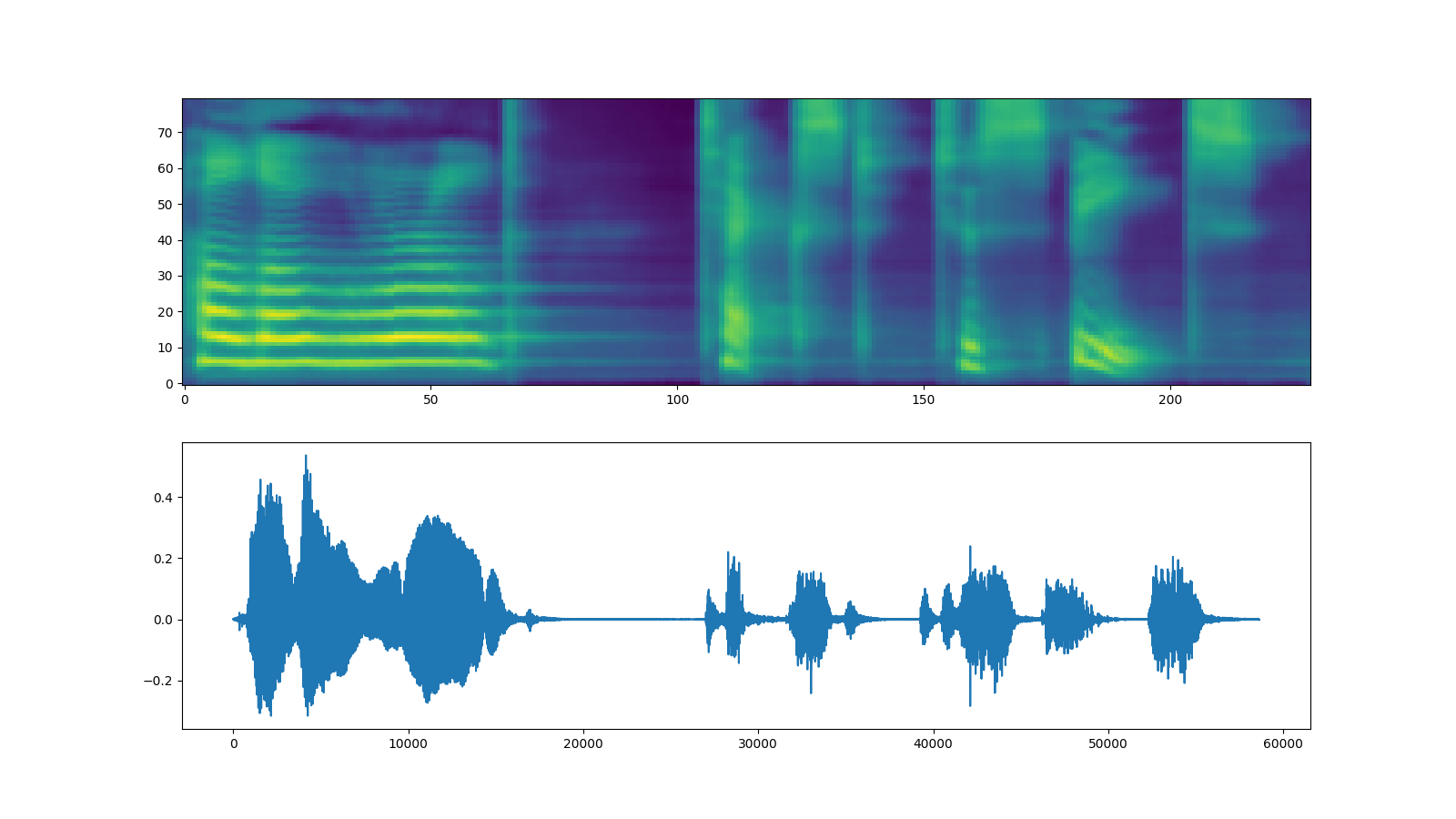

Griffin-Lim

Using the Griffin-Lim vocoder works the same way as WaveRNN: instantiate the vocoder object with the `get_vocoder()` method and pass it the spectrogram.

```python
bundle = torchaudio.pipelines.TACOTRON2_GRIFFINLIM_PHONE_LJSPEECH

processor = bundle.get_text_processor()
tacotron2 = bundle.get_tacotron2().to(device)
vocoder = bundle.get_vocoder().to(device)

with torch.inference_mode():
    processed, lengths = processor(text)
    processed = processed.to(device)
    lengths = lengths.to(device)
    spec, spec_lengths, _ = tacotron2.infer(processed, lengths)
    waveforms, lengths = vocoder(spec, spec_lengths)

fig, [ax1, ax2] = plt.subplots(2, 1, figsize=(16, 9))
ax1.imshow(spec[0].cpu().detach(), origin="lower", aspect="auto")
ax2.plot(waveforms[0].cpu().detach())

IPython.display.Audio(waveforms[0:1].cpu(), rate=vocoder.sample_rate)
```

Downloading: "https://download.pytorch.org/torchaudio/models/tacotron2_english_phonemes_1500_epochs_ljspeech.pth" to /root/.cache/torch/hub/checkpoints/tacotron2_english_phonemes_1500_epochs_ljspeech.pth


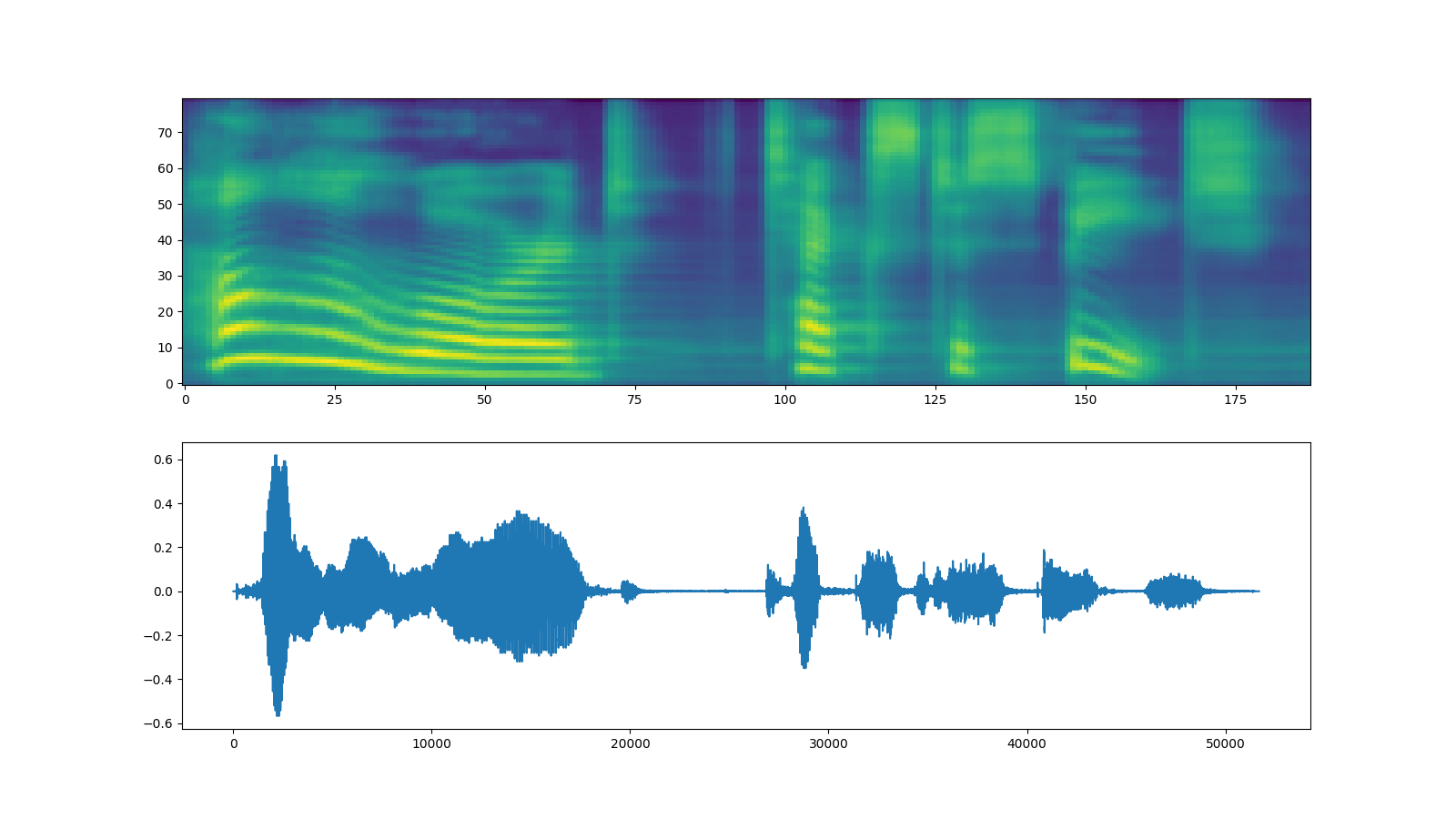
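Unlike WaveRNN, Griffin-Lim has no learned weights: it recovers a plausible phase for a magnitude spectrogram by alternating between the time and frequency domains. Below is a plain NumPy sketch of the classic iteration on a linear-magnitude STFT. It is not torchaudio's implementation (`torchaudio.transforms.GriffinLim` adds options such as momentum), and it skips the mel-to-linear step that Tacotron2's 80-bin mel output would need first. The FFT size, hop, and test tone are arbitrary choices.

```python
import numpy as np


def stft(x, n_fft=256, hop=64):
    """Hann-windowed STFT of a 1-D signal; returns shape (frames, n_fft // 2 + 1)."""
    window = np.hanning(n_fft)
    n_frames = 1 + (len(x) - n_fft) // hop
    frames = np.stack([x[i * hop : i * hop + n_fft] * window for i in range(n_frames)])
    return np.fft.rfft(frames, axis=1)


def istft(spec, n_fft=256, hop=64):
    """Least-squares inverse STFT: windowed overlap-add divided by summed window**2."""
    window = np.hanning(n_fft)
    frames = np.fft.irfft(spec, n=n_fft, axis=1) * window
    length = n_fft + hop * (len(frames) - 1)
    out = np.zeros(length)
    norm = np.zeros(length)
    for i, frame in enumerate(frames):
        out[i * hop : i * hop + n_fft] += frame
        norm[i * hop : i * hop + n_fft] += window ** 2
    # Samples covered only by the window's near-zero tails are set to 0
    # instead of being blown up by a tiny denominator.
    covered = norm > 1e-3
    return np.where(covered, out / np.where(covered, norm, 1.0), 0.0)


def griffin_lim(magnitude, n_iter=50, n_fft=256, hop=64, seed=0):
    """Estimate a waveform whose STFT magnitude approximates `magnitude`."""
    rng = np.random.default_rng(seed)
    phase = np.exp(2j * np.pi * rng.random(magnitude.shape))  # random initial phase
    for _ in range(n_iter):
        signal = istft(magnitude * phase, n_fft, hop)  # back to the time domain
        phase = np.exp(1j * np.angle(stft(signal, n_fft, hop)))  # keep only the phase
    return istft(magnitude * phase, n_fft, hop)


def spectral_error(signal, magnitude, n_fft=256, hop=64):
    """Relative distance between |STFT(signal)| and the target magnitude."""
    diff = np.abs(stft(signal, n_fft, hop)) - magnitude
    return np.linalg.norm(diff) / np.linalg.norm(magnitude)


# Usage: throw away the phase of a 440 Hz tone and rebuild a waveform from magnitude alone.
t = np.arange(4096) / 8000.0
tone = np.sin(2 * np.pi * 440.0 * t)
target = np.abs(stft(tone))
estimate = griffin_lim(target, n_iter=50)
print(spectral_error(griffin_lim(target, n_iter=0), target), spectral_error(estimate, target))
```

The error should drop from the random-phase starting point as iterations proceed; because the phase is only estimated, Griffin-Lim output typically sounds less natural than a neural vocoder such as WaveRNN.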

Waveglow

Waveglow is a vocoder published by Nvidia. The pretrained weights are published on Torch Hub, so the model can be instantiated with the `torch.hub` module.

```python
# Workaround to load model mapped on GPU
# https://stackoverflow.com/a/61840832
waveglow = torch.hub.load(
    "NVIDIA/DeepLearningExamples:torchhub",
    "nvidia_waveglow",
    model_math="fp32",
    pretrained=False,
)
checkpoint = torch.hub.load_state_dict_from_url(
    "https://api.ngc.nvidia.com/v2/models/nvidia/waveglowpyt_fp32/versions/1/files/nvidia_waveglowpyt_fp32_20190306.pth",  # noqa: E501
    progress=False,
    map_location=device,
)
state_dict = {key.replace("module.", ""): value for key, value in checkpoint["state_dict"].items()}

waveglow.load_state_dict(state_dict)
waveglow = waveglow.remove_weightnorm(waveglow)
waveglow = waveglow.to(device)
waveglow.eval()

with torch.no_grad():
    waveforms = waveglow.infer(spec)

fig, [ax1, ax2] = plt.subplots(2, 1, figsize=(16, 9))
ax1.imshow(spec[0].cpu().detach(), origin="lower", aspect="auto")
ax2.plot(waveforms[0].cpu().detach())

IPython.display.Audio(waveforms[0:1].cpu(), rate=22050)
```

/usr/local/envs/python3.8/lib/python3.8/site-packages/torch/hub.py:286: UserWarning: You are about to download and run code from an untrusted repository. In a future release, this won't be allowed. To add the repository to your trusted list, change the command to {calling_fn}(..., trust_repo=False) and a command prompt will appear asking for an explicit confirmation of trust, or load(..., trust_repo=True), which will assume that the prompt is to be answered with 'yes'. You can also use load(..., trust_repo='check') which will only prompt for confirmation if the repo is not already trusted. This will eventually be the default behaviour

warnings.warn(

Downloading: "https://github.com/NVIDIA/DeepLearningExamples/zipball/torchhub" to /root/.cache/torch/hub/torchhub.zip

/root/.cache/torch/hub/NVIDIA_DeepLearningExamples_torchhub/PyTorch/Classification/ConvNets/image_classification/models/common.py:13: UserWarning: pytorch_quantization module not found, quantization will not be available

warnings.warn(

/root/.cache/torch/hub/NVIDIA_DeepLearningExamples_torchhub/PyTorch/Classification/ConvNets/image_classification/models/efficientnet.py:17: UserWarning: pytorch_quantization module not found, quantization will not be available

warnings.warn(

Downloading: "https://api.ngc.nvidia.com/v2/models/nvidia/waveglowpyt_fp32/versions/1/files/nvidia_waveglowpyt_fp32_20190306.pth" to /root/.cache/torch/hub/checkpoints/nvidia_waveglowpyt_fp32_20190306.pth
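The `state_dict` comprehension in the WaveGlow code strips the `module.` prefix, which `torch.nn.DataParallel` adds to every parameter name when a wrapped model is saved; presumably that is how this checkpoint was written, since the keys must match the unwrapped model for `load_state_dict` to succeed. `str.replace` removes every occurrence of `module.`, including one inside a key. A prefix-only variant, sketched with made-up keys and placeholder values (`strip_prefix` is a hypothetical helper, written without `str.removeprefix` so it also runs on Python 3.8):

```python
def strip_prefix(state_dict, prefix="module."):
    """Return a copy of state_dict with a leading `prefix` removed from each key."""
    return {
        (key[len(prefix):] if key.startswith(prefix) else key): value
        for key, value in state_dict.items()
    }


# Hypothetical keys; the integers stand in for tensors.
checkpoint_state = {
    "module.layer.weight": 1,
    "module.block.module.bias": 2,
    "head.bias": 3,
}

print(strip_prefix(checkpoint_state))
# {'layer.weight': 1, 'block.module.bias': 2, 'head.bias': 3}
print({k.replace("module.", ""): v for k, v in checkpoint_state.items()})
# {'layer.weight': 1, 'block.bias': 2, 'head.bias': 3}
```

For the WaveGlow checkpoint both approaches give the same result as long as no key contains `module.` past its start.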

Total running time of the script: (2 minutes 40.567 seconds)