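Audio Data Augmentation¶

torchaudio provides a variety of ways to augment audio data.

In this tutorial, we look at how to apply effects, filters, RIR (room impulse response), and codecs.

Finally, we synthesize noisy speech from clean speech.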

import torch

import torchaudio

import torchaudio.functional as F

print(torch.__version__)

print(torchaudio.__version__)

Out:

1.12.0

0.12.0

Preparation¶

First, we import the modules and download the audio assets used in this tutorial.

import math

from IPython.display import Audio

import matplotlib.pyplot as plt

from torchaudio.utils import download_asset

SAMPLE_WAV = download_asset("tutorial-assets/steam-train-whistle-daniel_simon.wav")

SAMPLE_RIR = download_asset("tutorial-assets/Lab41-SRI-VOiCES-rm1-impulse-mc01-stu-clo-8000hz.wav")

SAMPLE_SPEECH = download_asset("tutorial-assets/Lab41-SRI-VOiCES-src-sp0307-ch127535-sg0042-8000hz.wav")

SAMPLE_NOISE = download_asset("tutorial-assets/Lab41-SRI-VOiCES-rm1-babb-mc01-stu-clo-8000hz.wav")

Out:

0%| | 0.00/427k [00:00<?, ?B/s]

100%|##########| 427k/427k [00:00<00:00, 67.1MB/s]

0%| | 0.00/31.3k [00:00<?, ?B/s]

100%|##########| 31.3k/31.3k [00:00<00:00, 11.5MB/s]

0%| | 0.00/78.2k [00:00<?, ?B/s]

100%|##########| 78.2k/78.2k [00:00<00:00, 20.9MB/s]

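Applying effects and filtering¶

The torchaudio.sox_effects module lets you apply filters similar to those available in sox directly to Tensor objects and to file-object audio sources.

There are two functions for this:

- torchaudio.sox_effects.apply_effects_tensor() applies effects to a Tensor.
- torchaudio.sox_effects.apply_effects_file() applies effects to other audio sources.

Both functions accept effect definitions in the form List[List[str]]. This is mostly consistent with how the sox command works, with one caveat: sox adds some effects automatically, whereas torchaudio's implementation does not.

For the list of available effects, please refer to the sox documentation.

Tip: If you need to load and resample your audio data on the fly, you can use torchaudio.sox_effects.apply_effects_file() with the effect "rate".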
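As a minimal sketch of what such an effect definition looks like, the helper below builds a List[List[str]] chain for resampling on load. The name `make_resample_effects` and its `mono` option are hypothetical and not part of torchaudio; the point is only that every effect is a list of strings, with the effect name first and its arguments after it.

```python
from typing import List


def make_resample_effects(target_rate: int, mono: bool = False) -> List[List[str]]:
    """Build a sox-style effect chain (hypothetical helper, not a torchaudio API)."""
    effects: List[List[str]] = []
    if mono:
        # The sox "channels" effect downmixes to the requested channel count.
        effects.append(["channels", "1"])
    # The sox "rate" effect resamples; all arguments must be passed as strings.
    effects.append(["rate", str(target_rate)])
    return effects


effects_16k = make_resample_effects(16000, mono=True)
print(effects_16k)  # [['channels', '1'], ['rate', '16000']]

# Intended use (needs a torchaudio build with sox support and a real file path):
# waveform, sample_rate = torchaudio.sox_effects.apply_effects_file(path, effects=effects_16k)
```

The resulting list can be passed unchanged to either apply_effects_file() or apply_effects_tensor().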

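Note: torchaudio.sox_effects.apply_effects_file() accepts a file-like object or a path-like object. Similar to torchaudio.load(), when the audio format cannot be inferred from either the file extension or the header, you can provide the format argument to specify the format of the audio source.

Note: This process is not differentiable.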

# Load the data

waveform1, sample_rate1 = torchaudio.load(SAMPLE_WAV)

# Define effects

effects = [

["lowpass", "-1", "300"], # apply single-pole lowpass filter

["speed", "0.8"], # reduce the speed

# This only changes sample rate, so it is necessary to

# add `rate` effect with original sample rate after this.

["rate", f"{sample_rate1}"],

["reverb", "-w"], # Reverberation gives some dramatic feeling

]

# Apply effects

waveform2, sample_rate2 = torchaudio.sox_effects.apply_effects_tensor(waveform1, sample_rate1, effects)

print(waveform1.shape, sample_rate1)

print(waveform2.shape, sample_rate2)

Out:

torch.Size([2, 109368]) 44100

torch.Size([2, 136710]) 44100

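Note that the number of frames differs from the original after the effects are applied (136710 vs. 109368). Some effects can also change the number of channels, although here it stays at 2. Let's listen to the audio.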
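The new frame count can be checked by hand. The sketch below assumes that `speed` followed by `rate` at the original sample rate is the only length-changing step in the chain, which matches the shapes printed above; `expected_frames` is a hypothetical helper introduced only for this check.

```python
def expected_frames(num_frames: int, speed: float) -> int:
    """Frame count after `speed` followed by `rate` back to the original sample rate.

    Slowing playback by a factor `speed` < 1 stretches the signal by 1 / speed.
    """
    return round(num_frames / speed)


print(expected_frames(109368, 0.8))  # 136710
```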

def plot_waveform(waveform, sample_rate, title="Waveform", xlim=None):
    waveform = waveform.numpy()

    num_channels, num_frames = waveform.shape
    time_axis = torch.arange(0, num_frames) / sample_rate

    figure, axes = plt.subplots(num_channels, 1)
    if num_channels == 1:
        axes = [axes]
    for c in range(num_channels):
        axes[c].plot(time_axis, waveform[c], linewidth=1)
        axes[c].grid(True)
        if num_channels > 1:
            axes[c].set_ylabel(f"Channel {c+1}")
        if xlim:
            axes[c].set_xlim(xlim)
    figure.suptitle(title)
    plt.show(block=False)

def plot_specgram(waveform, sample_rate, title="Spectrogram", xlim=None):
    waveform = waveform.numpy()

    num_channels, _ = waveform.shape

    figure, axes = plt.subplots(num_channels, 1)
    if num_channels == 1:
        axes = [axes]
    for c in range(num_channels):
        axes[c].specgram(waveform[c], Fs=sample_rate)
        if num_channels > 1:
            axes[c].set_ylabel(f"Channel {c+1}")
        if xlim:
            axes[c].set_xlim(xlim)
    figure.suptitle(title)
    plt.show(block=False)

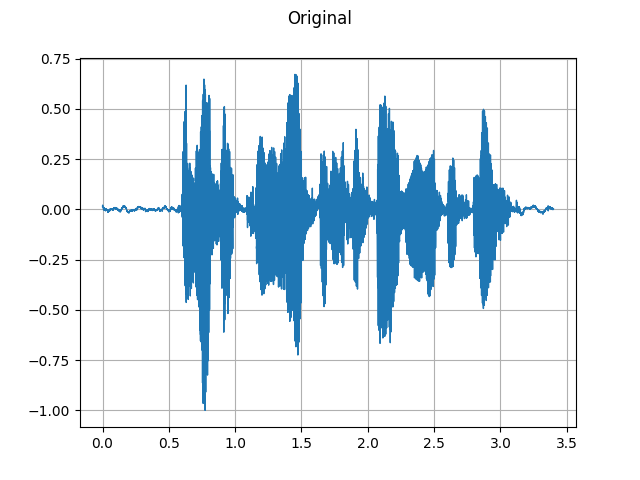

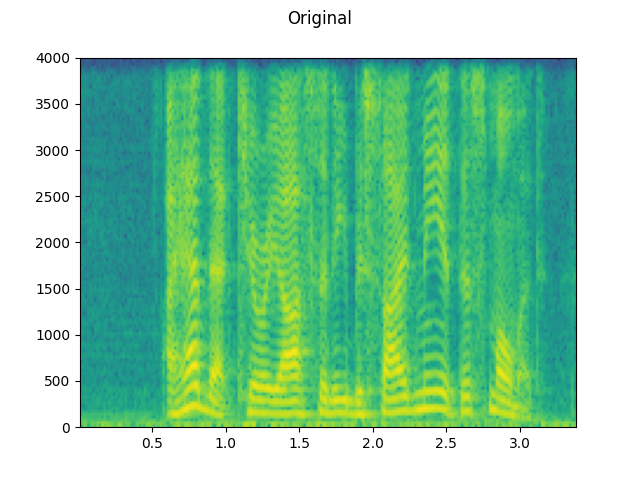

Original:¶

plot_waveform(waveform1, sample_rate1, title="Original", xlim=(-0.1, 3.2))

plot_specgram(waveform1, sample_rate1, title="Original", xlim=(0, 3.04))

Audio(waveform1, rate=sample_rate1)

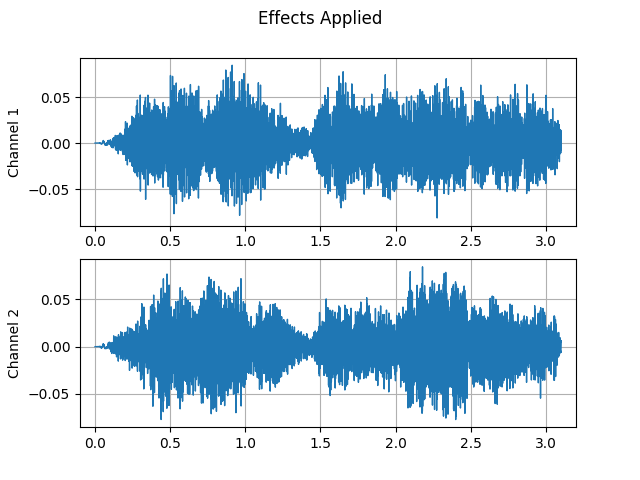

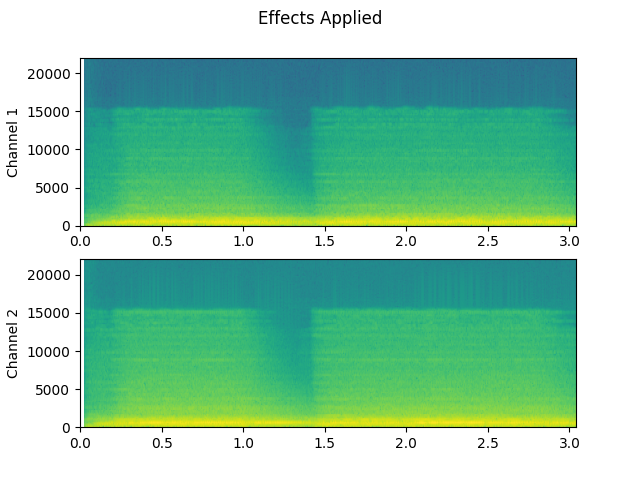

Effects applied:¶

plot_waveform(waveform2, sample_rate2, title="Effects Applied", xlim=(-0.1, 3.2))

plot_specgram(waveform2, sample_rate2, title="Effects Applied", xlim=(0, 3.04))

Audio(waveform2, rate=sample_rate2)

Doesn't it sound more dramatic?

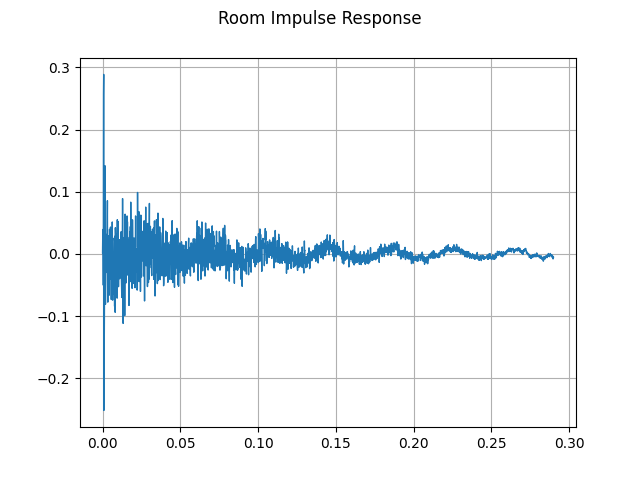

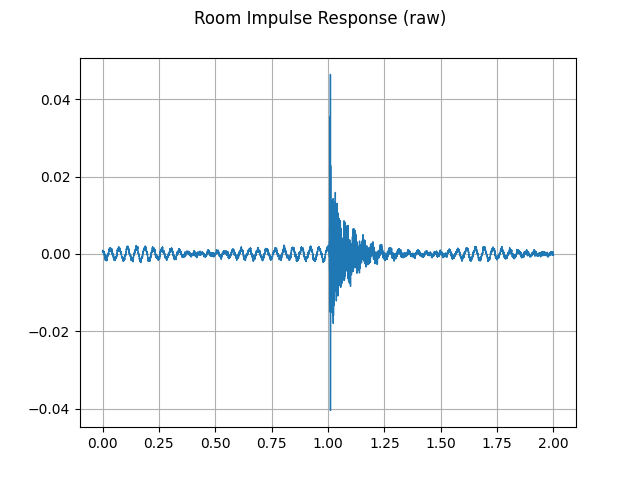

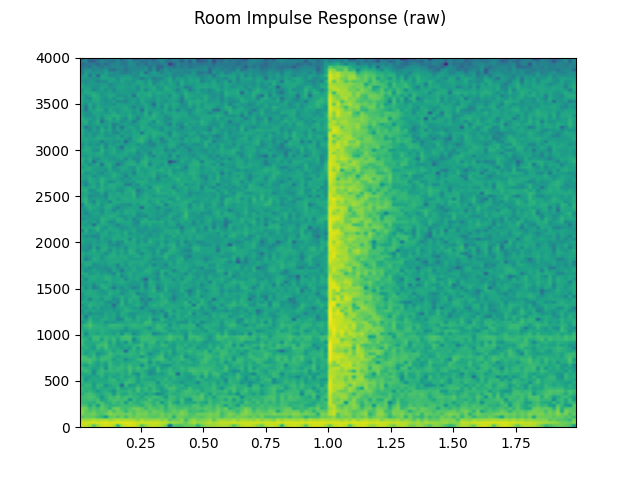

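Simulating room reverberation¶

Convolution reverb is a technique used to make clean audio sound as though it was produced in a different environment.

Using a room impulse response (RIR), for instance, we can make clean speech sound as though it was uttered in a conference room.

For this process, we need RIR data. The following data are from the VOiCES dataset, but you can also record your own: just turn on your microphone and clap your hands.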

rir_raw, sample_rate = torchaudio.load(SAMPLE_RIR)

plot_waveform(rir_raw, sample_rate, title="Room Impulse Response (raw)")

plot_specgram(rir_raw, sample_rate, title="Room Impulse Response (raw)")

Audio(rir_raw, rate=sample_rate)

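First, we need to clean up the RIR. We extract the main impulse, normalize the signal power, then flip it along the time axis. The flip is needed because torch.nn.functional.conv1d computes a cross-correlation (it slides the kernel without reversing it), so a flipped kernel yields a true convolution.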

rir = rir_raw[:, int(sample_rate * 1.01) : int(sample_rate * 1.3)]

rir = rir / torch.norm(rir, p=2)

RIR = torch.flip(rir, [1])

plot_waveform(rir, sample_rate, title="Room Impulse Response")

Then, we convolve the speech signal with the RIR filter.

speech, _ = torchaudio.load(SAMPLE_SPEECH)

speech_ = torch.nn.functional.pad(speech, (RIR.shape[1] - 1, 0))

augmented = torch.nn.functional.conv1d(speech_[None, ...], RIR[None, ...])[0]
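To see what the pad-and-convolve step computes, here is a small sketch on toy data. The four-sample signal and three-tap impulse response are made up for illustration, and `TF` is just a local alias for torch.nn.functional. It compares the conv1d result against a direct evaluation of y[n] = sum_k h[k] x[n - k].

```python
import torch
import torch.nn.functional as TF

signal = torch.tensor([[1.0, 2.0, 3.0, 4.0]])  # (channel, time), toy data
kernel = torch.tensor([[1.0, 0.5, 0.25]])      # toy impulse response h[0], h[1], h[2]

# conv1d does not reverse the kernel, so flip it to get a true convolution.
flipped = torch.flip(kernel, [1])

# Left-pad with len(h) - 1 zeros so the output is causal and keeps the input length.
padded = TF.pad(signal, (kernel.shape[1] - 1, 0))
out = TF.conv1d(padded[None, ...], flipped[None, ...])[0]

# Reference: direct evaluation of the convolution sum.
ref = torch.zeros_like(signal)
for n in range(signal.shape[1]):
    for k in range(kernel.shape[1]):
        if n - k >= 0:
            ref[0, n] += kernel[0, k] * signal[0, n - k]

print(out.tolist())  # [[1.0, 2.5, 4.25, 6.0]]
print(torch.allclose(out, ref))  # True
```

Each output sample depends only on the current and earlier input samples, which is how a real room response behaves.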

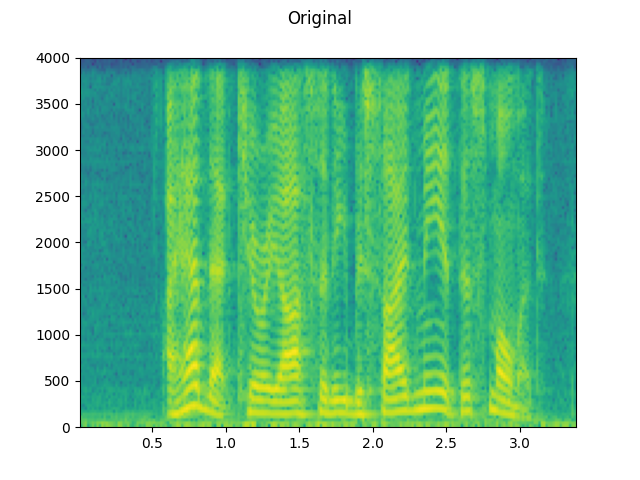

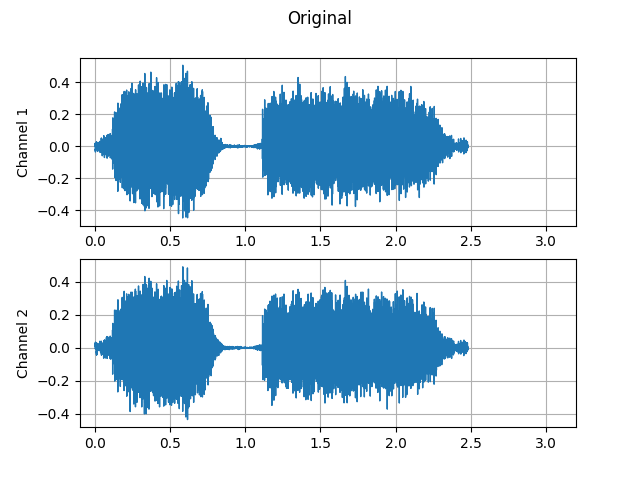

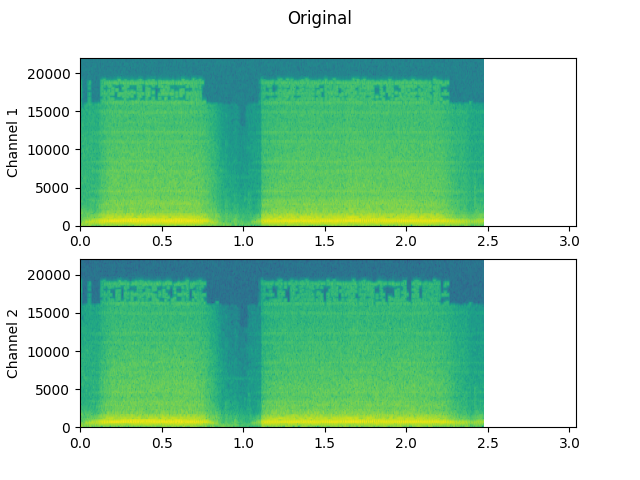

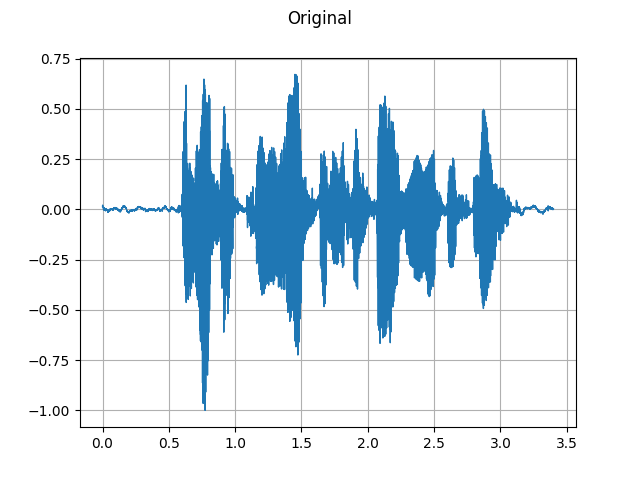

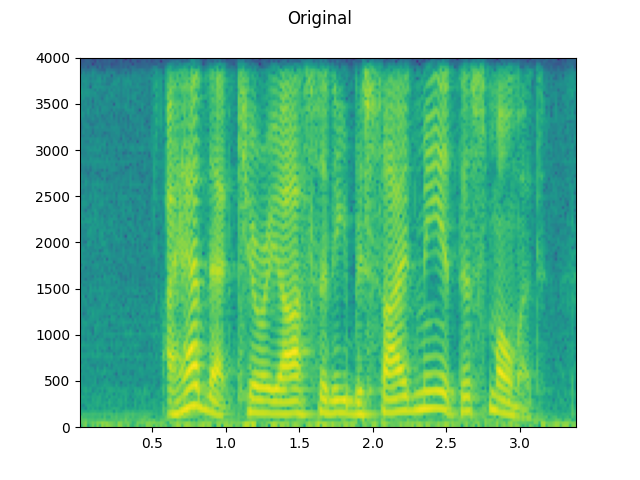

Original:¶

plot_waveform(speech, sample_rate, title="Original")

plot_specgram(speech, sample_rate, title="Original")

Audio(speech, rate=sample_rate)

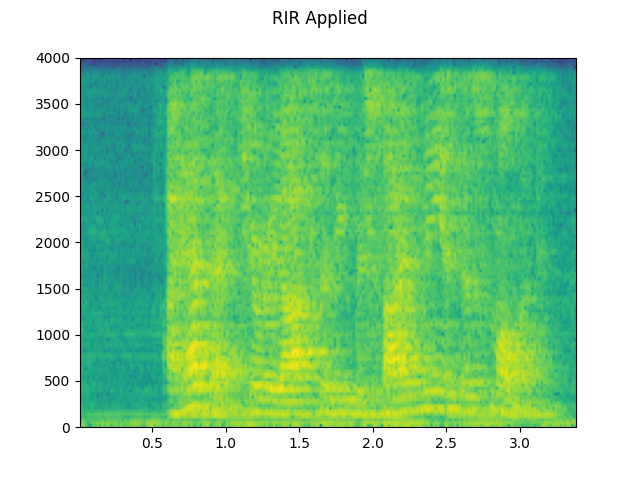

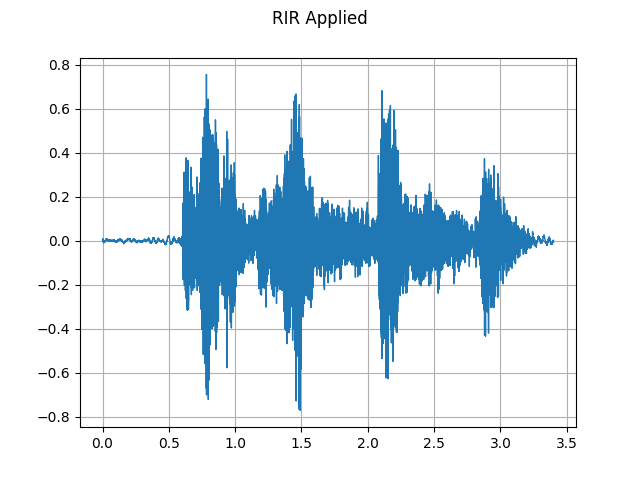

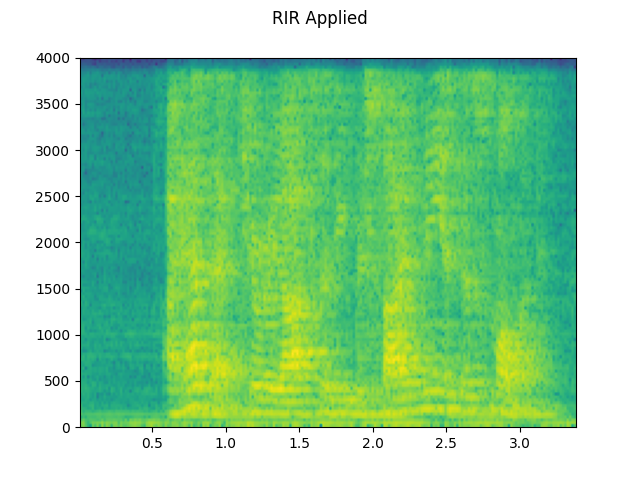

RIR applied:¶

plot_waveform(augmented, sample_rate, title="RIR Applied")

plot_specgram(augmented, sample_rate, title="RIR Applied")

Audio(augmented, rate=sample_rate)

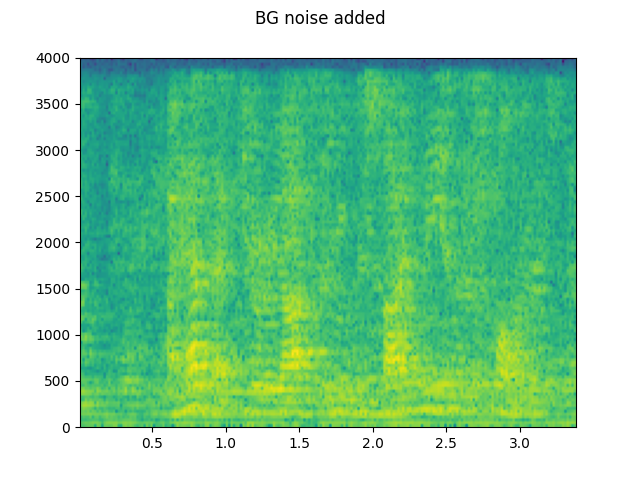

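Adding background noise¶

To add background noise to audio data, you can simply add a noise Tensor to the Tensor representing the audio data. A common way to adjust the intensity of the noise is to change the signal-to-noise ratio (SNR). [wikipedia]

$$ \mathrm{SNR} = \frac{P_{signal}}{P_{noise}} $$

$$ \mathrm{SNR_{dB}} = 10 \log _{{10}} \mathrm {SNR} $$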

speech, _ = torchaudio.load(SAMPLE_SPEECH)
noise, _ = torchaudio.load(SAMPLE_NOISE)
noise = noise[:, : speech.shape[1]]

# These are L2 norms (square roots of the energies), so their ratio is an amplitude ratio.
speech_power = speech.norm(p=2)
noise_power = noise.norm(p=2)

snr_dbs = [20, 10, 3]
noisy_speeches = []
for snr_db in snr_dbs:
    # Amplitude ratio for the target SNR, hence the division by 20 rather than 10.
    snr = 10 ** (snr_db / 20)
    scale = snr * noise_power / speech_power
    noisy_speeches.append((scale * speech + noise) / 2)
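A quick way to convince yourself that this scaling hits the requested SNR is to measure it afterwards. The sketch below uses random tensors as stand-ins for the speech and noise waveforms; `mix_at_snr` and `measured_snr_db` are hypothetical helper names, not torchaudio functions.

```python
import math

import torch

torch.manual_seed(0)
speech = torch.randn(1, 8000)       # stand-in for a speech waveform
noise = 0.3 * torch.randn(1, 8000)  # stand-in for background noise


def mix_at_snr(speech, noise, snr_db):
    """Return (scaled speech, mixture) with speech scaled to the target SNR."""
    amplitude_ratio = 10 ** (snr_db / 20)  # the power ratio is 10 ** (snr_db / 10)
    scale = amplitude_ratio * noise.norm(p=2) / speech.norm(p=2)
    scaled = scale * speech
    return scaled, (scaled + noise) / 2


def measured_snr_db(signal, noise):
    """SNR in dB computed from the energies of the two tensors."""
    ratio = (signal.pow(2).sum() / noise.pow(2).sum()).item()
    return 10 * math.log10(ratio)


for snr_db in [20, 10, 3]:
    scaled, _ = mix_at_snr(speech, noise, snr_db)
    # The measured value should match the target up to floating-point error.
    print(snr_db, round(measured_snr_db(scaled, noise), 3))
```

The final division by 2 lowers the level of the whole mixture and leaves the ratio between speech and noise unchanged.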

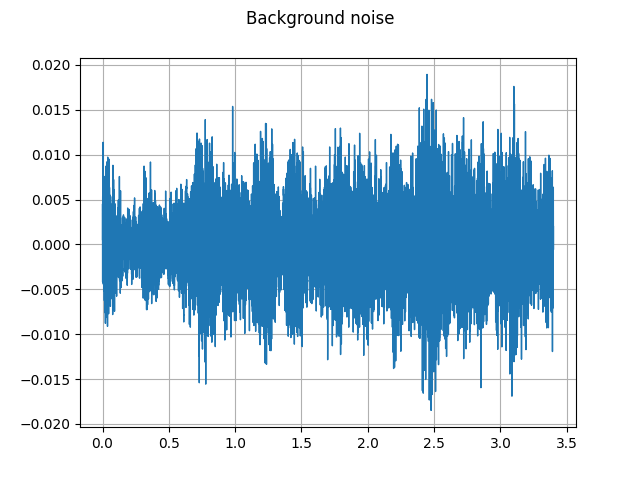

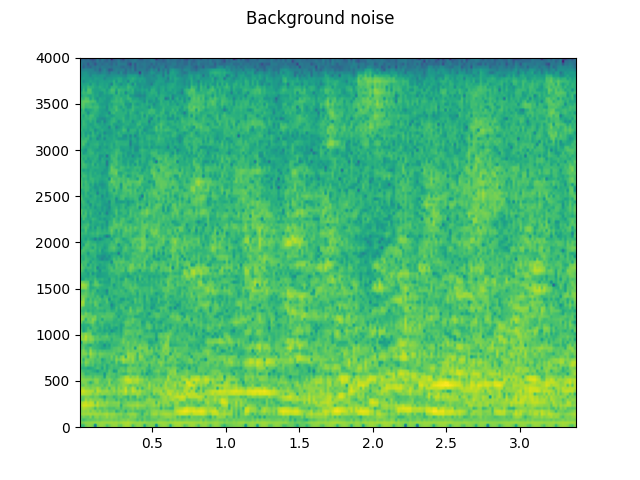

Background noise:¶

plot_waveform(noise, sample_rate, title="Background noise")

plot_specgram(noise, sample_rate, title="Background noise")

Audio(noise, rate=sample_rate)

SNR 20 dB:¶

snr_db, noisy_speech = snr_dbs[0], noisy_speeches[0]

plot_waveform(noisy_speech, sample_rate, title=f"SNR: {snr_db} [dB]")

plot_specgram(noisy_speech, sample_rate, title=f"SNR: {snr_db} [dB]")

Audio(noisy_speech, rate=sample_rate)

SNR 10 dB:¶

snr_db, noisy_speech = snr_dbs[1], noisy_speeches[1]

plot_waveform(noisy_speech, sample_rate, title=f"SNR: {snr_db} [dB]")

plot_specgram(noisy_speech, sample_rate, title=f"SNR: {snr_db} [dB]")

Audio(noisy_speech, rate=sample_rate)

SNR 3 dB:¶

snr_db, noisy_speech = snr_dbs[2], noisy_speeches[2]

plot_waveform(noisy_speech, sample_rate, title=f"SNR: {snr_db} [dB]")

plot_specgram(noisy_speech, sample_rate, title=f"SNR: {snr_db} [dB]")

Audio(noisy_speech, rate=sample_rate)

Applying codec to Tensor object¶

torchaudio.functional.apply_codec() can apply codecs to a Tensor object.

Note: This process is not differentiable.

waveform, sample_rate = torchaudio.load(SAMPLE_SPEECH)

configs = [

{"format": "wav", "encoding": "ULAW", "bits_per_sample": 8},

{"format": "gsm"},

{"format": "vorbis", "compression": -1},

]

waveforms = []

for param in configs:
    augmented = F.apply_codec(waveform, sample_rate, **param)
    waveforms.append(augmented)
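The first config stores each sample in 8 bits on a logarithmic (mu-law) scale. As a rough illustration of why that keeps quiet samples reasonably accurate, the sketch below applies the continuous mu-law companding formula with mu = 255 to single sample values. It is not the codec that apply_codec runs (G.711 uses a piecewise-linear approximation of this curve), and all function names here are hypothetical.

```python
import math

MU = 255  # 8-bit mu-law uses mu = 255


def mu_law_compress(x: float, mu: int = MU) -> float:
    """Map a sample in [-1, 1] onto a logarithmic scale in [-1, 1]."""
    return math.copysign(math.log1p(mu * abs(x)) / math.log1p(mu), x)


def mu_law_expand(y: float, mu: int = MU) -> float:
    """Inverse of mu_law_compress."""
    return math.copysign(((1 + mu) ** abs(y) - 1) / mu, y)


def quantize_8bit(y: float, mu: int = MU) -> float:
    """Snap a value in [-1, 1] to one of 256 evenly spaced levels."""
    level = round((y + 1) / 2 * mu)
    return level / mu * 2 - 1


def roundtrip(x: float) -> float:
    """Compress, quantize to 8 bits, then expand again."""
    return mu_law_expand(quantize_8bit(mu_law_compress(x)))


for x in [0.01, 0.5, 1.0]:
    print(x, abs(roundtrip(x) - x))  # absolute error grows with amplitude
```

Because the quantization steps are finer near zero, small amplitudes are reproduced with a smaller absolute error than large ones.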

Original:¶

plot_waveform(waveform, sample_rate, title="Original")

plot_specgram(waveform, sample_rate, title="Original")

Audio(waveform, rate=sample_rate)

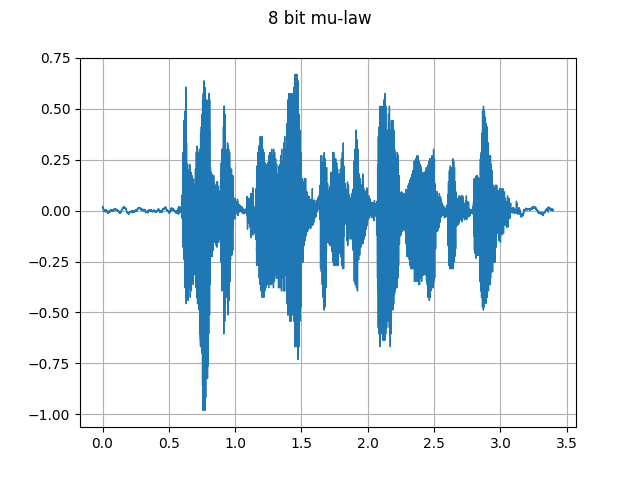

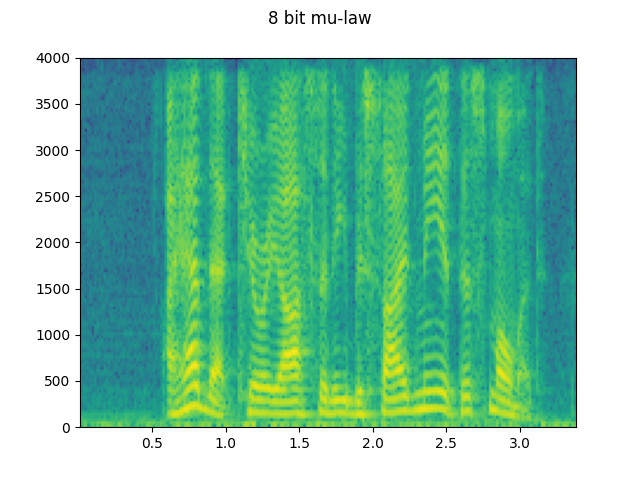

8 bit mu-law:¶

plot_waveform(waveforms[0], sample_rate, title="8 bit mu-law")

plot_specgram(waveforms[0], sample_rate, title="8 bit mu-law")

Audio(waveforms[0], rate=sample_rate)

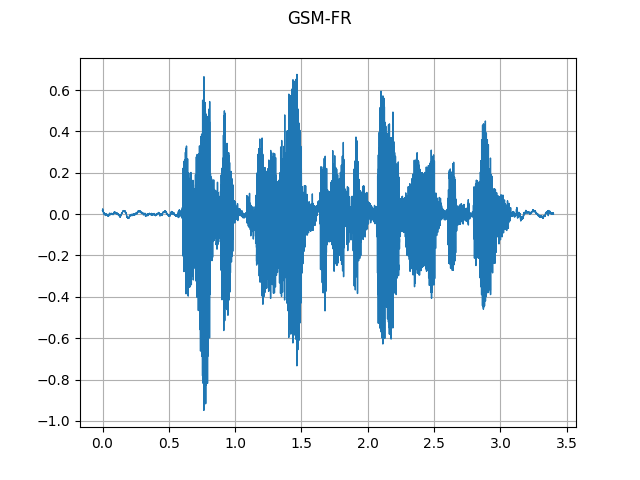

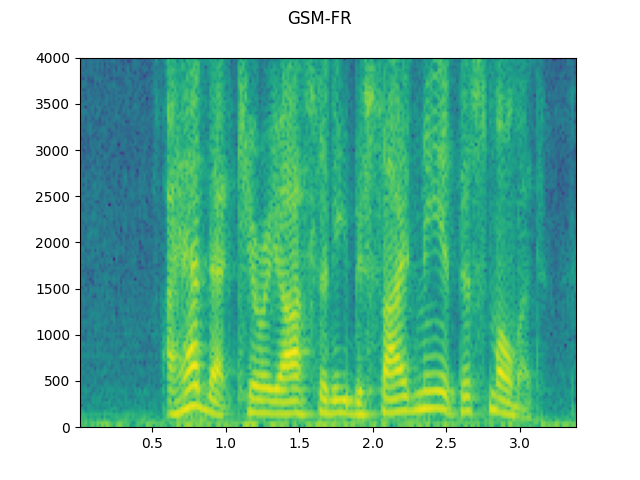

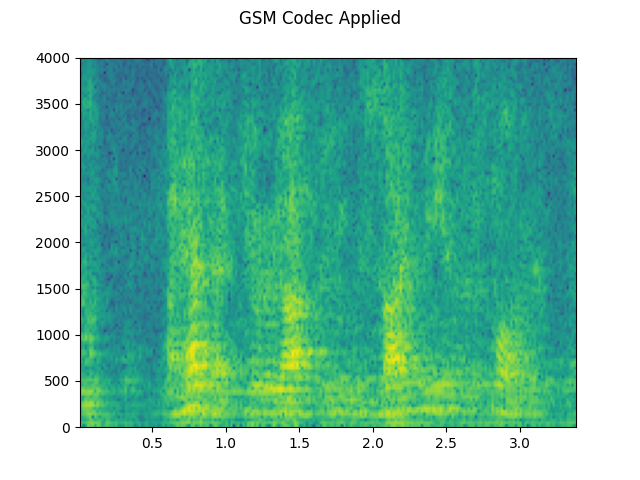

GSM-FR:¶

plot_waveform(waveforms[1], sample_rate, title="GSM-FR")

plot_specgram(waveforms[1], sample_rate, title="GSM-FR")

Audio(waveforms[1], rate=sample_rate)

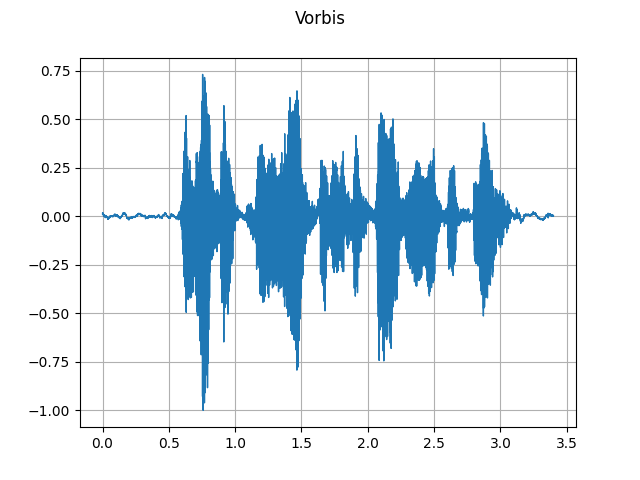

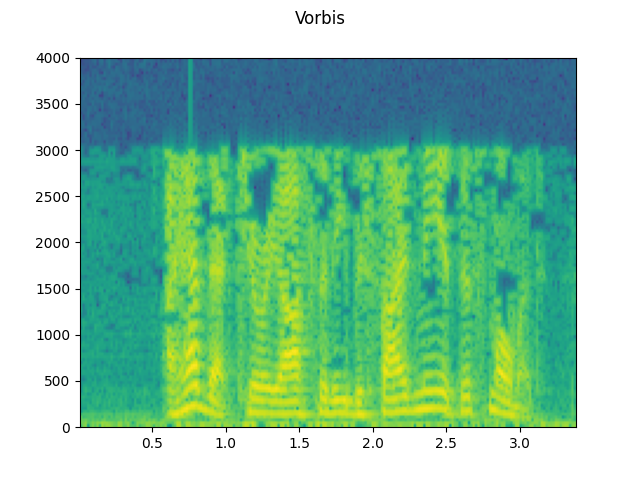

Vorbis:¶

plot_waveform(waveforms[2], sample_rate, title="Vorbis")

plot_specgram(waveforms[2], sample_rate, title="Vorbis")

Audio(waveforms[2], rate=sample_rate)

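Simulating a phone recording¶

Combining the previous techniques, we can simulate audio that sounds like a person talking over a phone in an echoey room with people talking in the background.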

# The sample rate is read from the file, so no separate assignment is needed.
original_speech, sample_rate = torchaudio.load(SAMPLE_SPEECH)

plot_specgram(original_speech, sample_rate, title="Original")

# Apply RIR

speech_ = torch.nn.functional.pad(original_speech, (RIR.shape[1] - 1, 0))

rir_applied = torch.nn.functional.conv1d(speech_[None, ...], RIR[None, ...])[0]

plot_specgram(rir_applied, sample_rate, title="RIR Applied")

# Add background noise

# Because the noise is recorded in the actual environment, we consider that

# the noise contains the acoustic feature of the environment. Therefore, we add

# the noise after RIR application.

noise, _ = torchaudio.load(SAMPLE_NOISE)

noise = noise[:, : rir_applied.shape[1]]

snr_db = 8

# Amplitude ratio for the target SNR: 10 ** (snr_db / 20), matching the SNR_dB definition.
scale = 10 ** (snr_db / 20) * noise.norm(p=2) / rir_applied.norm(p=2)

bg_added = (scale * rir_applied + noise) / 2

plot_specgram(bg_added, sample_rate, title="BG noise added")

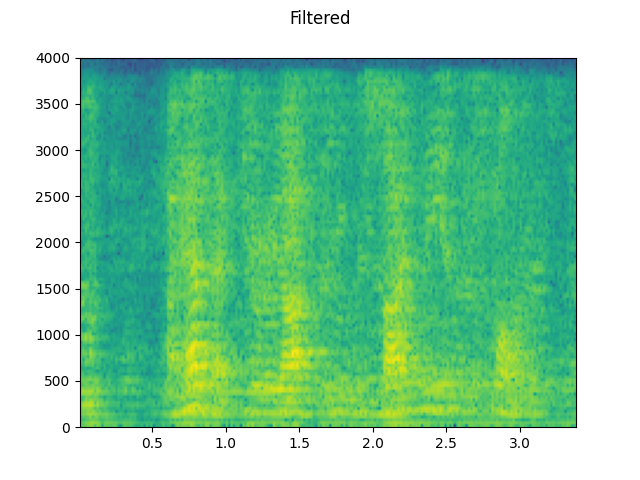

# Apply filtering and change sample rate

filtered, sample_rate2 = torchaudio.sox_effects.apply_effects_tensor(

bg_added,

sample_rate,

effects=[

["lowpass", "4000"],

[

"compand",

"0.02,0.05",

"-60,-60,-30,-10,-20,-8,-5,-8,-2,-8",

"-8",

"-7",

"0.05",

],

["rate", "8000"],

],

)

plot_specgram(filtered, sample_rate2, title="Filtered")

# Apply telephony codec

codec_applied = F.apply_codec(filtered, sample_rate2, format="gsm")

plot_specgram(codec_applied, sample_rate2, title="GSM Codec Applied")

![SNR: 20 [dB]](https://pytorch.org/audio/0.12.0/_images/sphx_glr_audio_data_augmentation_tutorial_014.png)

![SNR: 20 [dB]](https://pytorch.org/audio/0.12.0/_images/sphx_glr_audio_data_augmentation_tutorial_015.png)

![SNR: 10 [dB]](https://pytorch.org/audio/0.12.0/_images/sphx_glr_audio_data_augmentation_tutorial_016.png)

![SNR: 10 [dB]](https://pytorch.org/audio/0.12.0/_images/sphx_glr_audio_data_augmentation_tutorial_017.png)

![SNR: 3 [dB]](https://pytorch.org/audio/0.12.0/_images/sphx_glr_audio_data_augmentation_tutorial_018.png)

![SNR: 3 [dB]](https://pytorch.org/audio/0.12.0/_images/sphx_glr_audio_data_augmentation_tutorial_019.png)